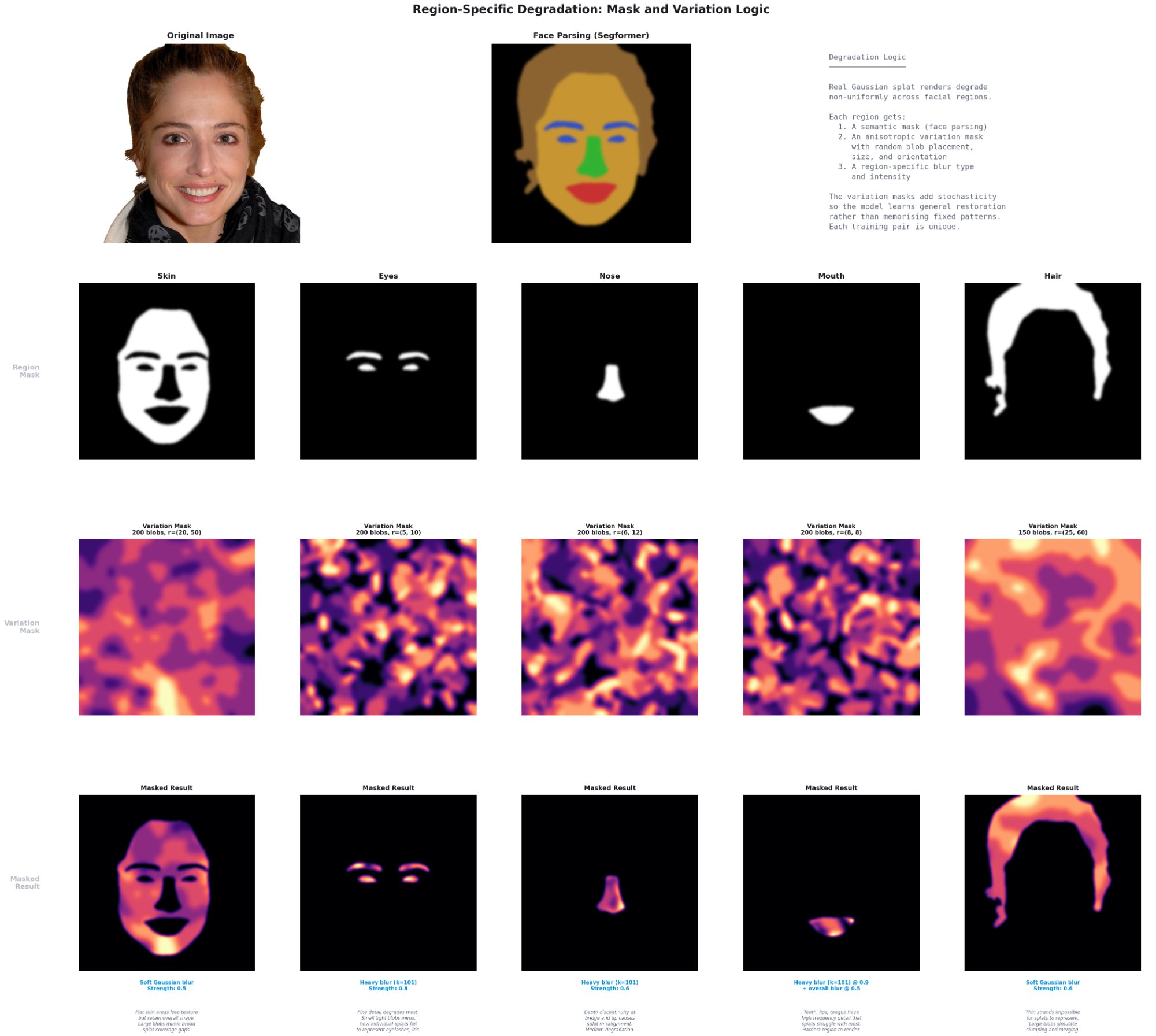

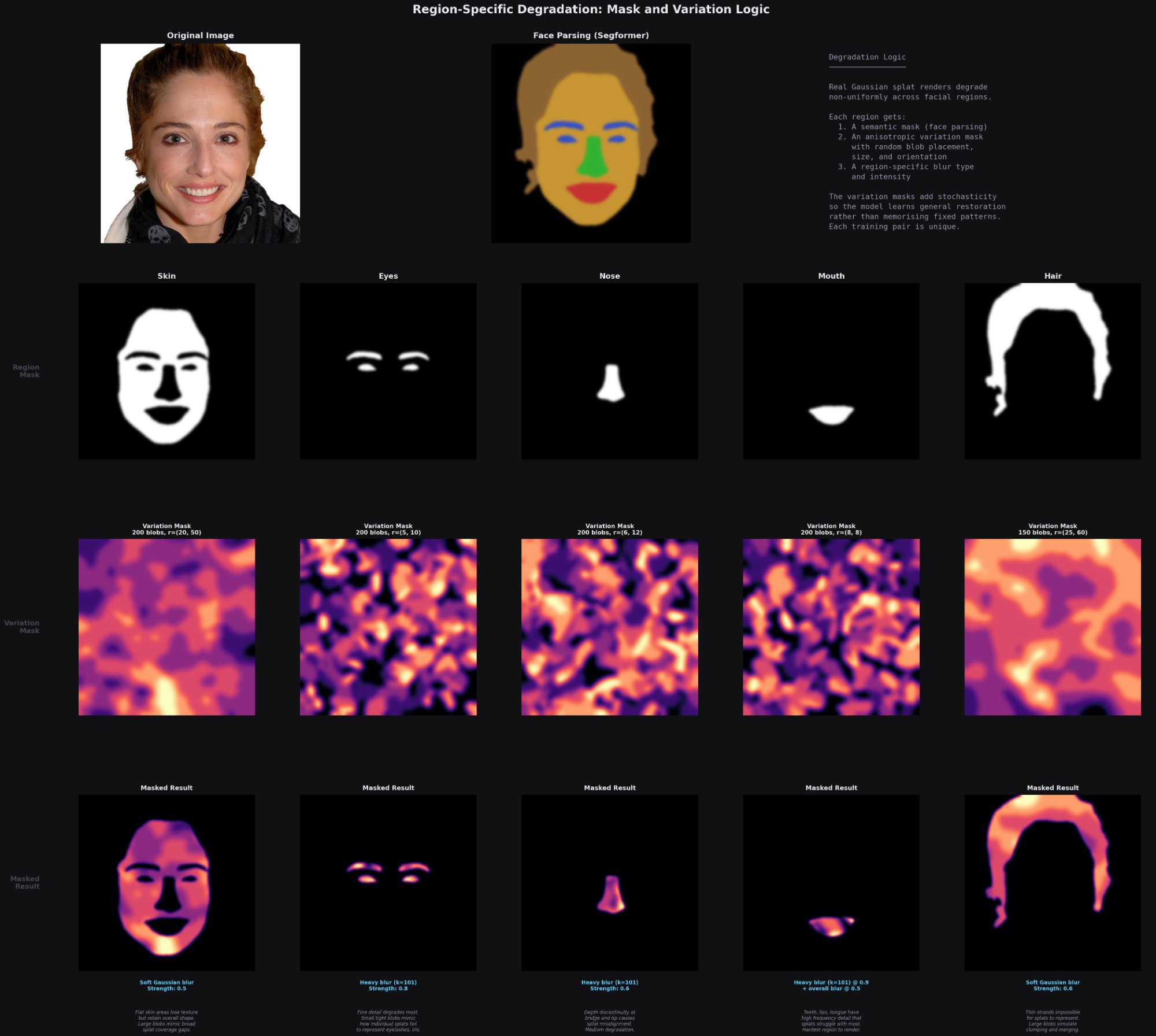

Region-Specific Degradation

A SegFormer face parser segments each image into regions, and each region receives degradation calibrated to how splats typically fail there. Anisotropic variation masks keep the degradation patchy rather than uniform, mirroring how individual 3D Gaussians project differently depending on their position, orientation, and scale.
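One way to picture an anisotropic variation mask is as a sum of stretched, rotated 2D Gaussians. The following is a minimal sketch under that assumption: each blob gets a random position, orientation, and pair of axis scales, and the summed field is rescaled to [0, 1]. The function name `anisotropic_mask`, the blob count, and the scale ranges are hypothetical illustration choices, not the pipeline's actual parameters.

```python
import math
import random


def anisotropic_mask(height, width, n_blobs=12, seed=0):
    """Patchy mask in [0, 1] built from randomly oriented, stretched 2D Gaussians.

    Hypothetical sketch: n_blobs and the scale ranges below are assumptions.
    """
    rng = random.Random(seed)
    blobs = []
    for _ in range(n_blobs):
        cx = rng.uniform(0, width - 1)          # position
        cy = rng.uniform(0, height - 1)
        theta = rng.uniform(0, math.pi)         # orientation
        sx = rng.uniform(0.05, 0.25) * width    # major-axis scale (assumed range)
        sy = sx * rng.uniform(0.2, 0.6)         # shorter minor axis -> anisotropic
        blobs.append((cx, cy, math.cos(theta), math.sin(theta), sx, sy))

    mask = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total = 0.0
            for cx, cy, c, s, sx, sy in blobs:
                dx, dy = x - cx, y - cy
                u = c * dx + s * dy     # offset along the blob's major axis
                v = -s * dx + c * dy    # offset along the blob's minor axis
                total += math.exp(-0.5 * ((u / sx) ** 2 + (v / sy) ** 2))
            mask[y][x] = total

    # Rescale so the strongest pixel is exactly 1.0.
    peak = max(max(row) for row in mask)
    return [[val / peak for val in row] for row in mask]
```

Multiplying a region's degradation strength by such a mask leaves some pixels near full strength and others almost untouched. Plain Python keeps the sketch dependency-free; at real image resolutions an array library such as NumPy would be the natural choice.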

The mouth region receives the heaviest degradation (0.9) because of the depth discontinuities between teeth, lips, and tongue. The eyes receive 0.7 because competing splats in a small area lose fine detail. Hair receives 0.6 because merging splats clump strands together. The nose and skin receive progressively lighter degradation, with skin the lightest.
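The per-region values can be read as a lookup table applied to the face parser's label map. In this sketch the label map is assumed to be a 2D list of region names (the names are hypothetical, not a specific model's class IDs). The mouth, eye, and hair values come from the text; the nose and skin values are placeholders chosen only to respect the stated ordering. `strength_map` and `check_ordering` are hypothetical helpers.

```python
# Mouth, eyes, and hair values are from the text. NOSE and SKIN are
# hypothetical placeholders: the text only says they receive progressively less.
REGION_STRENGTH = {
    "mouth": 0.9,
    "eyes": 0.7,
    "hair": 0.6,
    "nose": 0.4,   # hypothetical
    "skin": 0.25,  # hypothetical
}
ORDER = ["mouth", "eyes", "hair", "nose", "skin"]


def check_ordering(strengths):
    """True if strengths strictly decrease from mouth down to skin."""
    values = [strengths[name] for name in ORDER]
    return all(a > b for a, b in zip(values, values[1:]))


def strength_map(labels, strengths=REGION_STRENGTH, variation=None):
    """Per-pixel degradation strength from a 2D map of region names.

    Labels not in `strengths` (e.g. "background") get 0.0.
    `variation` is an optional 2D list in [0, 1] that scales each pixel.
    """
    out = []
    for y, row in enumerate(labels):
        out_row = []
        for x, name in enumerate(row):
            s = strengths.get(name, 0.0)
            if variation is not None:
                s *= variation[y][x]
            out_row.append(s)
        out.append(out_row)
    return out
```

Keeping the table separate from the per-pixel loop makes it easy to retune a single region, and `check_ordering` guards against a retune that breaks the mouth > eyes > hair > nose > skin ranking.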